Why we rebuilt how our AI thinks, and what that means for students


For the last two years, most AI tools in higher education have worked the same way: match a student’s question to the closest answer, return it. Fast, predictable, and increasingly the wrong architecture for what academic AI actually needs to do.

The problem is not the speed. It’s the assumption underneath the design: that the right answer already exists in a document index somewhere, and all the system needs to do is retrieve it. That works for FAQs. It fails for students, who ask vague questions, layered questions, questions that only make sense with context about their course, their prior conversation, and where they are in the material.

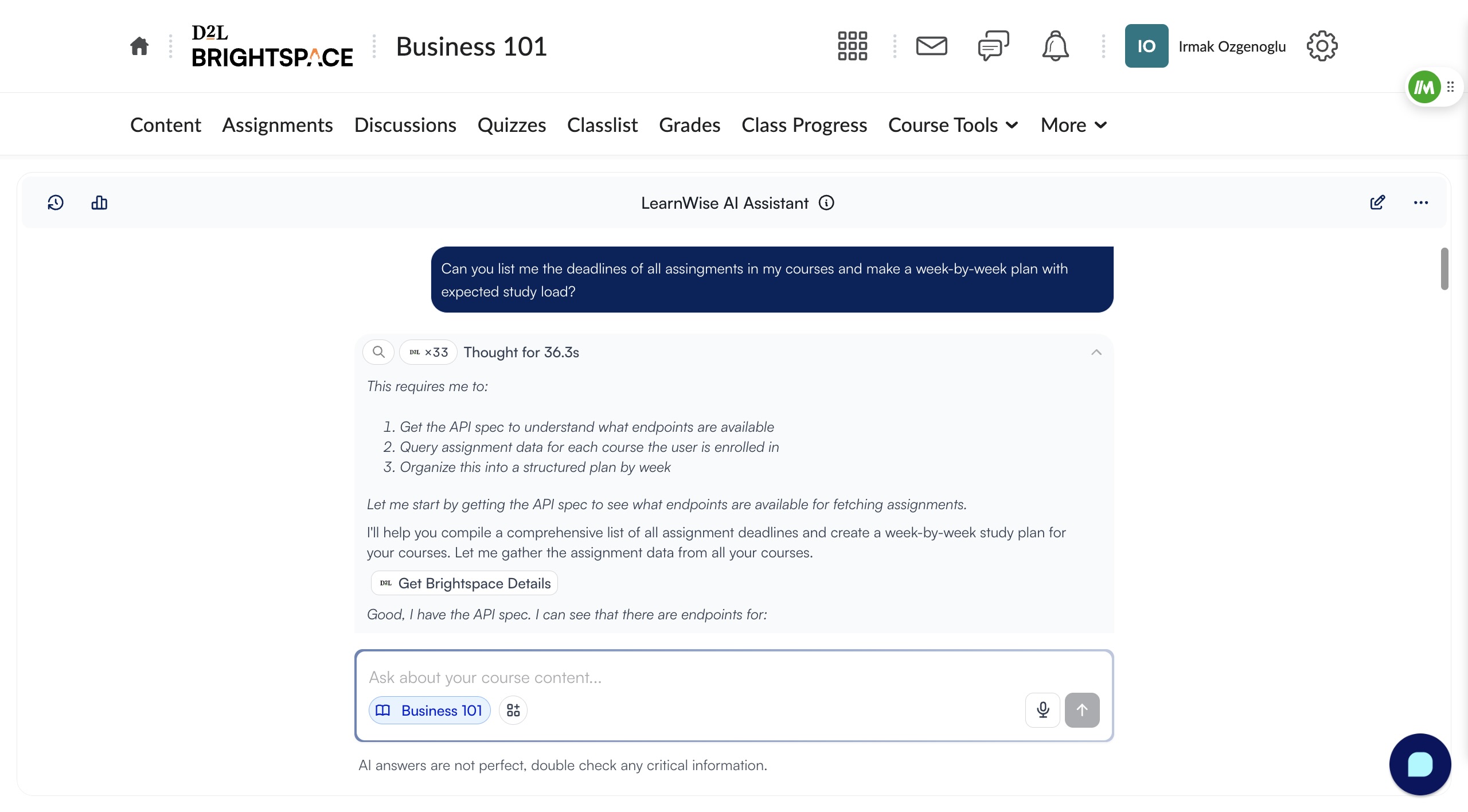

This week, LearnWise rolled out Agentic Search: a fundamental change to how AI Chat and AI Tutor think. This is not a new interface or another integration. AI Chat and AI Tutor now have a different cognitive architecture, and this is the first in a series of agentic upgrades coming to the platform.

Why is Agentic Search a game-changer?

Retrieval works when a question is clean and the answer is explicit. A student typing ‘what is the deadline for Assignment 2’ gets a direct match. But most student questions do not look like that.

‘Can you help me with my assignment?’ has no clean match. Neither does ‘I don’t understand the third concept from last week.’ The retrieval model returns something plausible, usually a generic explanation from course material, and the student either accepts a wrong answer or loses trust in the tool entirely.

That second outcome, students silently disengaging, was the failure mode we cared most about. Every time an AI tells a student it cannot help, or returns something that clearly did not understand the question, it pushes them somewhere else. And it makes the AI harder to rely on as a support layer for faculty and administrators, because the answers it produces cannot be trusted consistently.

So the architecture needed to change. Retrieval was the ceiling. Enter Agentic Search.

How does Agentic Search actually think?

LearnWise now applies iterative reasoning before it responds. Instead of pattern-matching to the most likely answer, it breaks down each question, evaluates what it knows, searches in multiple passes, and builds a response step by step. Here is what that looks like in practice:

- Query breakdown. The question is parsed for intent, not surface-level keywords. ‘I don’t get the third concept’ triggers a lookup of the course’s third unit, not a search for the word ‘concept.’

- Context evaluation. Before searching, the assistant checks what course the student is in, what they have asked in this session, and what they are most likely stuck on. That context shapes everything that follows.

- First search pass. The assistant searches deliberately within that context, within the relevant course material and institutional knowledge, not across all available content.

- Further passes if needed, up to 6 iterations. If the first pass does not surface a clear answer, Agentic Search reformulates and searches again, checking subsections, adjacent topics, and related material. The rate of dead-end ‘no sources found’ responses has dropped significantly as a result.

- Response construction with reasoning trace. The answer is built step by step, and you can see every step directly in the chat interface: the searches it ran, the logic it followed, and why it landed where it did.

- Clarification of ambiguous queries. If a query is ambiguous or unclear, the assistant asks for clarification before answering. That gives it better context, reduces confusion, and resolves queries more efficiently.
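To make the loop above concrete, here is a minimal sketch in Python. It is an illustration under stated assumptions, not LearnWise's implementation: the names `agentic_search`, `toy_search`, and `toy_reformulate`, the toy index, and the course ID `BIO101` are all hypothetical. Only the overall shape (search within course context, reformulate, retry up to 6 times, keep a trace, fall back to asking for clarification) comes from the description above.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional

MAX_ITERATIONS = 6  # the post describes up to 6 search iterations


@dataclass
class SearchResult:
    answer: Optional[str]                           # None means "ask the student to clarify"
    trace: List[str] = field(default_factory=list)  # reasoning trace shown to the user


def agentic_search(
    question: str,
    course: str,
    search: Callable[[str, str], List[str]],
    reformulate: Callable[[str, int], str],
    max_iterations: int = MAX_ITERATIONS,
) -> SearchResult:
    """Search within the course context, reformulating until something is found."""
    trace = [f"context: course={course!r}"]
    query = question
    for attempt in range(1, max_iterations + 1):
        trace.append(f"pass {attempt}: search {query!r}")
        hits = search(course, query)
        if hits:
            trace.append(f"pass {attempt}: found {len(hits)} source(s)")
            return SearchResult(answer=hits[0], trace=trace)
        # Nothing found: rewrite the query and try again.
        query = reformulate(query, attempt)
    trace.append("no clear answer: ask the student to clarify")
    return SearchResult(answer=None, trace=trace)


# Hypothetical toy data and helpers, for illustration only.
TOY_INDEX = {
    ("BIO101", "unit 3 osmosis"): "Osmosis is the movement of water across a membrane.",
}


def toy_search(course: str, query: str) -> List[str]:
    # Only look inside the student's own course, never across all content.
    return [text for (c, key), text in TOY_INDEX.items() if c == course and query in key]


def toy_reformulate(query: str, attempt: int) -> str:
    # Map a vague phrase to a course-structure lookup; otherwise leave it unchanged.
    rewrites = {"third concept": "unit 3"}
    return rewrites.get(query, query)


result = agentic_search("third concept", "BIO101", toy_search, toy_reformulate)
for step in result.trace:
    print(step)
print(result.answer)
```

In this sketch the first pass fails on the literal phrase, the reformulated second pass finds the unit, and the trace records both steps. A real system would use a language model for the reformulation and a proper retrieval backend for the search.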

Why can you see the AI reasoning, and why does that matter?

Generic AI solutions have a black box problem. In the classroom, a student might cite something the AI told them, but faculty cannot verify where the claim came from. An administrator has no audit layer for policy-sensitive queries. The answer appears, and no one knows how it got there.

Solutions built for educational institutions, like LearnWise AI, already provided sources with every response, linking relevant resources to every student query. Agentic Search goes one level deeper: every response shows the search steps it took and the logic behind the answer, visible directly in the chat. For faculty, this means reviewing not just what the assistant said but how it reasoned. For administrators, it is an accountability layer for academic integrity boundaries, out-of-scope deflections, and enrollment workflows. For students, it builds the kind of trust that turns an AI tool into something they rely on rather than work around. This is more than a cosmetic addition: it is a structural commitment to how AI should work in an environment where answers have real consequences.

How is it more accurate, and how does it know what a student actually needs?

Two questions come up consistently when institutions evaluate AI chat and tutoring tools. Here are direct answers.

On accuracy: Agentic Search does not guess. When a retrieval-based system is uncertain, it returns the closest plausible match, which can be confidently wrong. Agentic Search evaluates what it found and what is missing. When it cannot give a clear answer, it explains what it found, says what it does not know, and asks for clarification on ambiguous queries. That is a materially different outcome from ‘No sources found’.

On personalization: The assistant does not treat every question as context-free. It evaluates the course the student is enrolled in, their conversation history, and their likely point of confusion before generating a response. A question about ‘the assignment’ means something different in week 2 of an introductory course than in week 9 of an advanced one. Agentic Search holds that context and builds answers against it.

The measurable result: responses are now 63% more concise, averaging 730 characters compared to 1,990 before. More thinking up front means answers that get straight to the point with significantly less filler. When the assistant understands the question before it starts answering, the answer is shorter because it has to be: there is nothing generic left to pad it with.
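For readers who want to check the arithmetic, the 63% figure follows directly from the two averages quoted above. The helper name `percent_reduction` is ours, for illustration only.

```python
def percent_reduction(before: float, after: float) -> float:
    """Relative reduction from `before` to `after`, as a fraction of `before`."""
    return (before - after) / before


# Average response length in characters, as quoted in the post.
reduction = percent_reduction(1990, 730)
print(f"{reduction:.0%}")  # prints "63%"
```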

What about guardrails and institutional policy?

A more capable reasoning system that ignores institutional configuration is worse than a limited one that follows it reliably. This was a non-negotiable design constraint.

Out-of-scope deflection, academic integrity boundaries, and enrollment workflows are all fully upheld, including on edge cases where earlier versions would drift. Every edge case passed in internal testing and with beta institutions. Institutional policy compliance has improved precisely because the reasoning layer respects configuration rather than working around it.

This is the core difference between a general-purpose AI model and one built specifically for higher education. Course-aware, role-aware, and policy-aware reasoning is not something you get by default. It has to be built and maintained for this environment.

Is this the start of something bigger?

Yes. Agentic Search is the first of several agentic capabilities coming to LearnWise. Over the coming weeks, more will roll out across Chat, Tutor, and beyond. The way LearnWise works for students and faculty is fundamentally changing, and this is the foundation it is being built on.

The institutions that will get the most from AI in the next three years are not the ones that adopt the most tools. They are the ones that adopt the right architecture: an AI layer that reasons before it responds, shows its work, and respects the rules the institution has set. Agentic Search is what that looks like in practice.

If you are a lecturer, tutor, or academic support professional, this is what Agentic Search means for you in practice: your students now get answers that are calibrated to their actual course, not generic explanations pulled from somewhere on the internet. When they ask a vague question at 11pm before a deadline, the AI works through what they are likely stuck on rather than returning something unhelpful. And when you want to understand what your students are being told, you can; every answer now shows its reasoning. No more guessing what the AI said or why. You can explore how it works in AI Tutor and AI Campus Support, both updated with Agentic Search today.

When does this go live, and what do you need to do?

After a period of testing and evaluation with several of our partner institutions, Agentic Search is available now in early access. General availability launches on Thursday, April 9th, at which point it becomes the default for all LearnWise AI Chat and AI Tutor deployments.

If you want to enable it before general availability, use the early access option in your dashboard or contact your Customer Success lead.
